The disruptor’s press

BigFiveKiller.online: The one-man publishing house aiming to topple the biggest book publishers

Published 14.4.2026 | Photo / Video: AI generated, Freepik

Fred Zimmerman, a publishing veteran and founder of BigFiveKiller.online, is looking to reinvent the publishing world through the power of agentic AI. With a career spanning roles at NASA, the US intelligence community, and LexisNexis, Zimmerman combines a deep technical background with decades of literary experience—having already published over 500 titles through his company, Nimble Books. Now, he is leveraging the massive leaps in AI productivity seen in late 2025 to build a completely in silico publishing pipeline. Is a one-man publishing house capable of taking on the world's largest imprints?

A one-man publishing house aims to take on the Big Five with the help of AI. Is this madness or is there a method to it?

Madness no longer. In December of 2025 there was a stunning step change in programmer productivity using agentic tools like Anthropic's Claude Code and OpenAI's Codex. As documented by deeplearning.ai's The Batch in its year-end review of software development trends, coding agents that use frontier large language models went from completing roughly 14 percent of standardized software engineering tasks in 2024 to routinely completing more than 80 percent of the same tasks by the end of 2025, a roughly six-fold improvement in less than twelve months.[^1] It is now eminently sane to plan on single-handedly building large, complex applications that vertically integrate entire knowledge industries. Indeed, Anthropic has made a habit of doing just that, launching a suite of enterprise agent plug-ins in early 2026 for domains including financial research, HR, and engineering specifications, a direct bid to replace incumbent SaaS products across entire knowledge verticals.[^2] Technology is no longer a barrier to vertical integration in any knowledge domain. The challenges are all on the business and creative sides.

"If I can automate one publishing imprint, why can't I automate them all?"

How did the idea come about?

I first became interested in algorithmic publishing when I was working at LexisNexis in the early days of the Web. We were very proud of what was then a huge "data warehouse" of paywalled content, but I didn't think we were doing all we could with it. I started wondering if we could assemble e-books on specific topics across a wide breadth of sources. This idea became a prototype, which I returned to a decade later when I realized that 1) I could envisage a completely in silico book publishing pipeline; 2) while there were many steps in that pipeline that all required high levels of skill, none of them were completely intractable to algorithmic treatment; and 3) I could build it myself. I set out to teach myself to program. Now, a dozen years later, I have built numerous iterations of an algorithmic publishing pipeline, each one getting better as the technology and my skills advanced in tandem. The specific idea that became BigFiveKiller.online came to me last year when I realized that generative text technology had finally caught up to my high standards. I asked myself, "If I can automate one publishing imprint, why can't I automate them all?"

Could you tell us a bit about your background in the publishing industry?

My working career began as a writer for a computer magazine before the advent of the IBM PC and can be summarized abstractly as a variety of roles providing premium information services to knowledge workers in the science, legal, government, and library industries. More concretely, I worked for NASA, LexisNexis, the US intelligence community, and Gale/Cengage (the leading library vendor). I also started my own publishing company, Nimble Books, in 2004, and have published more than 500 books on a wide variety of topics, by more than 100 contracted authors, and in a wide variety of experimental formats. Probably more important than all of those experiences, though, is that I am a lifelong book lover with a passionate belief in the essential and transformative power of reading.

"My algorithmic publishing approach can scale to offering high-quality books to virtually all top-level BISAC categories"

A few months ago, Keith Riegert gave an impressive demonstration at the US Book Show of how AI agents can be used to automate entire processes involved in the creation and publication of books. You're starting directly with entire imprints that you want to build up. What, broadly speaking, does your process look like? Perhaps you could explain it using your recently published “Food Shock Atlas” as an example?

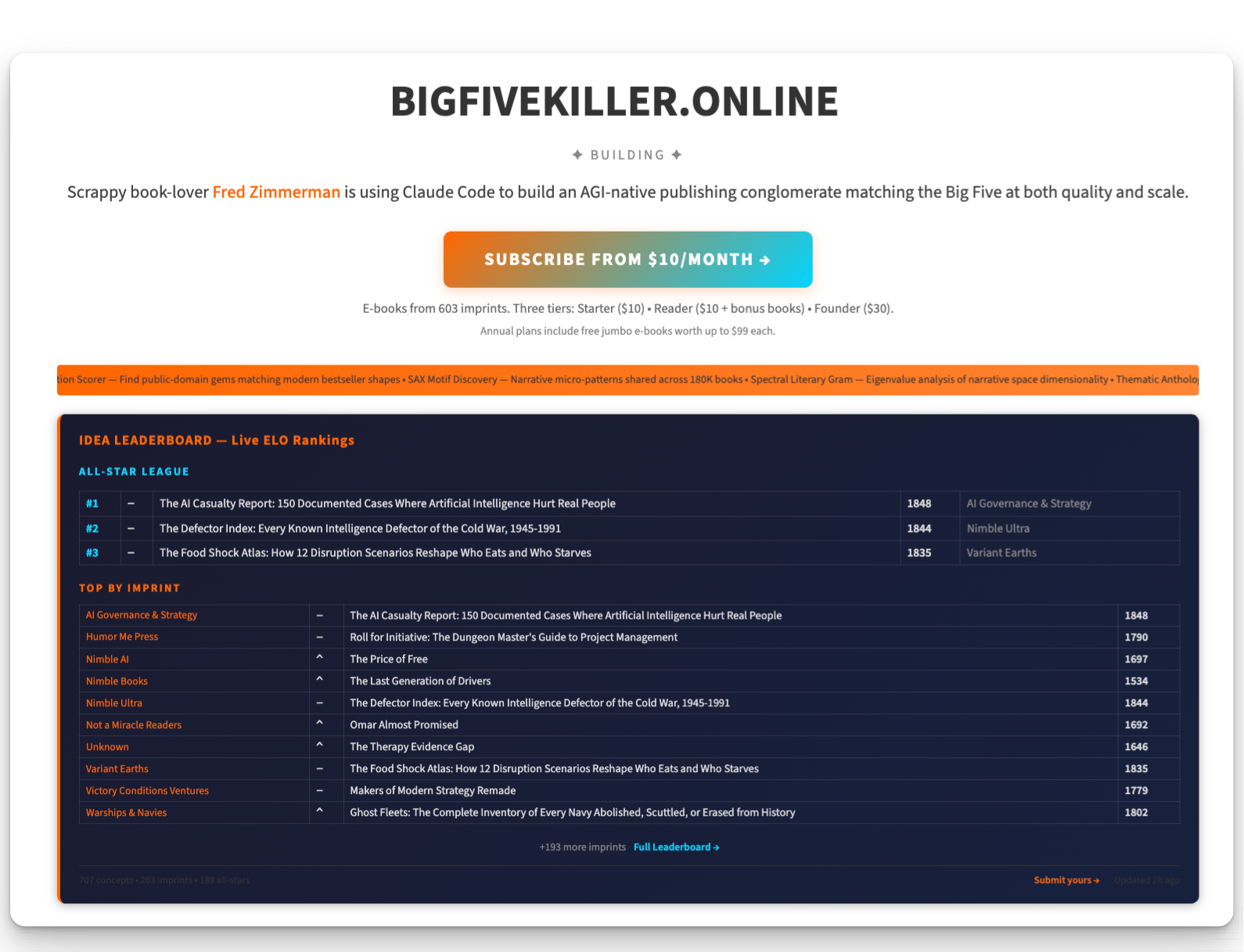

The process is highly automated and begins with what I call autoideation, where ideas from a variety of automatic and human sources are fed into a hopper and evaluated by a panel of six to twelve LLM expert judges on factors including revenue potential, feasibility, tractability to AI, production challenges, fit with my values, and so on. The results are ranked and displayed on a leaderboard that uses chess-style Elo ratings. [See the B5K.online leaderboard at https://bigfivekiller.online/leaderboard] The key moment in producing Food Shock Atlas was my decision to trust the evaluation process that, to my surprise, generated this idea on its own, then rated it highest out of a pool of 500 competing candidates. Although I had a bit of knowledge about global food data sets from previous roles, I had no particular interest in publishing anything about food, and certainly wouldn't consider myself expert enough to write on the topic. So allowing the system to commit me to a complex topic in an unfamiliar field was an important challenge to overcome, and an important step forward. Once I gave the green light, the system (in this case, Claude Code) zoomed around creating an outline, gathering data sets, writing text, and so forth. I made several important interventions that influenced the final product, e.g. I provided direction about how to create maps, added "a citizen's guide to mitigating food shocks", and so forth. But I did not write the text, and I relied heavily on multiple layers of automated review to reach publication-readiness, including adversarial review from a completely different model. I'm happy with the end result. It is a good-looking book that is a unique and valuable resource on an important topic.
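For readers curious how a chess-style leaderboard can be built from judge verdicts, here is a minimal sketch. It assumes each judge comparison is a head-to-head "which idea is better?" vote; the interview does not say how the B5K.online leaderboard is actually computed. The idea names, the `toy_judge` function, the starting rating of 1500, and the step size `K = 32` are all hypothetical placeholders, not details from the interview.

```python
from itertools import combinations

K = 32  # assumed update step size (a common chess default, not from the source)

def expected_score(rating_a, rating_b):
    """Probability that A beats B under the standard Elo logistic curve."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))

def update(ratings, winner, loser, k=K):
    """Shift both ratings toward the observed result of one comparison."""
    delta = k * (1.0 - expected_score(ratings[winner], ratings[loser]))
    ratings[winner] += delta
    ratings[loser] -= delta

def rank_ideas(ideas, judge_prefers, start=1500.0):
    """Run one round-robin of pairwise votes and return a sorted leaderboard."""
    ratings = {idea: start for idea in ideas}
    for a, b in combinations(ideas, 2):
        winner = judge_prefers(a, b)
        loser = b if winner == a else a
        update(ratings, winner, loser)
    return sorted(ratings.items(), key=lambda kv: kv[1], reverse=True)

# Hypothetical stand-in for an LLM judge panel: prefers the higher toy score.
toy_scores = {"idea_a": 0.9, "idea_b": 0.6, "idea_c": 0.3}

def toy_judge(a, b):
    return a if toy_scores[a] >= toy_scores[b] else b

leaderboard = rank_ideas(list(toy_scores), toy_judge)
for name, rating in leaderboard:
    print(f"{name}: {rating:.1f}")
```

In a real pipeline the judge function would aggregate several model verdicts per pair, and comparisons would be sampled rather than exhaustive for a pool of hundreds of candidates.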

Is your project more of a showcase (of what's possible with AI today) or actually a business case?

My first goal is to prove that my algorithmic publishing approach can scale to offering high-quality books to virtually all top-level BISAC categories, which is the scope served by the Big Five. I say "my approach" because I have my own idiosyncrasies that I bring to the project. To be perfectly candid I am an indifferent businessman. My threshold for real success is to be able to bring books to readers that are every bit as good as those by top-tier human authors. We all know that is a very challenging task, and in many genres it may not even be possible. In my estimation it has not yet been demonstrated that models can write fiction or narrative nonfiction as well as top-tier human authors, and there may be deep reasons for that. So I don't expect this proof of concept and scaling phase to end until an undetermined future time when there have been some truly major advances in the creative capabilities of "AI" systems. At that point, if that happens, and if I am successful in meeting my goals for quality, I will transition from experimentation to business activities such as selling services, developing partnerships, and seeking venture capital.

"AI does have an inherent advantage at creating serviceable prose fast"

What kind of books is AI already suitable for today? What will be possible tomorrow – and where will human intelligence still be necessary the day after tomorrow?

Creating a high-quality book solely with AI is still difficult and is only possible if you pick your spots.

I began a few years ago by enhancing public domain classics and government documents with AI-generated front and back matter. Human-guided AI is good at creating new "parts of the book" such as abstracts, selected passages, glossaries, illustrations, appendices, and indexes. These can add considerably to the utility of older books or modern documents that were created without a full apparatus tailored to modern readers. I like these books (it is fun taking a fresh look at the original material), and AI is well suited to creating them quickly and at high quality.

More recently, I have moved into nonfiction niches where AI has unique structural advantages. Today's agentic frontier models can do web research to assemble a broad picture of a particular phenomenon. They can also pull down data from sources such as GitHub and Hugging Face, then write code to analyze, narrate, and visualize the data. This both gives AI a structural advantage (it would be time-consuming and expensive for humans to gather and analyze all this material) and inoculates against hallucination by forcing the model to begin its prose generation with valid data. And AI can do all this in a matter of a day or two, whereas for human editors, creating a reference book is a massive task that requires outside expertise and a lot of effort.

Similar opportunities for category-redefining new formats can be found in other genres. Last week, I decided to play around with sudoku books, and wound up creating a format that has the unique advantage that all solutions are programmatically verified. The computations to achieve this are substantial and this is a moat against publishers relying on human editorial processes. Crucially, the marginal cost of new books is nearly zero, since sudoku books do not rely on expensive LLMs; if I can establish a foothold, this is self-sustaining at scale.
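As an illustration of what "programmatically verified" can mean at its simplest, here is a sketch of a checker for completed 9x9 grids. It is an assumption about the general technique, not a description of the actual pipeline. It confirms that a solution obeys the rules and matches the printed clues; proving that a puzzle has exactly one solution is a heavier search and is not shown. The function names and the sample grid are hypothetical.

```python
def is_valid_solution(grid):
    """Return True if grid is a completed, rule-abiding 9x9 sudoku."""
    digits = set(range(1, 10))
    if len(grid) != 9 or any(len(row) != 9 for row in grid):
        return False
    rows = [set(row) for row in grid]
    cols = [set(col) for col in zip(*grid)]
    boxes = [
        {grid[r][c] for r in range(br, br + 3) for c in range(bc, bc + 3)}
        for br in (0, 3, 6)
        for bc in (0, 3, 6)
    ]
    # Every row, column, and 3x3 box must contain each digit exactly once.
    return all(unit == digits for unit in rows + cols + boxes)

def matches_puzzle(puzzle, solution):
    """Check that the solution keeps every given clue (0 marks an empty cell)."""
    return all(
        p == 0 or p == s
        for prow, srow in zip(puzzle, solution)
        for p, s in zip(prow, srow)
    )

# A known-valid grid built from a standard shifting pattern (sample data only).
solution = [[(3 * (r % 3) + r // 3 + c) % 9 + 1 for c in range(9)] for r in range(9)]

# Blank a few cells to make a toy puzzle, and swap two cells to make a bad grid.
puzzle = [row[:] for row in solution]
puzzle[0][0] = puzzle[4][4] = puzzle[8][8] = 0
broken = [row[:] for row in solution]
broken[0][0], broken[0][1] = broken[0][1], broken[0][0]

print(is_valid_solution(solution), matches_puzzle(puzzle, solution))
print(is_valid_solution(broken))
```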

Another example: this week I was able to create a new imprint, Clearview, for large print books. Clearview relies on data mining of semantic embeddings to identify public domain books that are located in topical spaces that are undersupplied with large print offerings and are adjacent to topical spaces with demonstrated demand. This means that institutional buyers can be confident that Clearview is presenting books to them based on a synoptic, quantitative view of what large print books are most needed and most likely to coincide with demand.
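One way to picture this kind of embedding-based gap finding is the sketch below. It scores each candidate title by how close it sits to topics with demonstrated demand, minus how close it sits to topics already supplied in large print. The scoring rule, the function names, and the two-dimensional toy vectors are all assumptions made for illustration; the interview does not describe Clearview's actual method or data.

```python
import math

def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0

def nearest(vec, others):
    """Highest cosine similarity between vec and any vector in others."""
    return max((cosine(vec, o) for o in others), default=0.0)

def gap_score(candidate, supplied, demanded):
    """High when close to demonstrated demand but far from existing supply."""
    return nearest(candidate, demanded) - nearest(candidate, supplied)

# Toy 2-D vectors standing in for real semantic embeddings (hypothetical data).
supplied = [(1.0, 0.0)]   # topics already well covered in large print
demanded = [(0.0, 1.0)]   # topics with demonstrated demand
candidates = {
    "title_near_supply": (0.95, 0.05),
    "title_near_demand": (0.1, 0.9),
}

ranked = sorted(
    candidates,
    key=lambda title: gap_score(candidates[title], supplied, demanded),
    reverse=True,
)
print(ranked)
```

Real embeddings have hundreds of dimensions and would usually be searched with a vector index rather than a linear scan, but the ranking logic is the same in spirit.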

What about fiction?

The same category-redefinition logic applies to fiction, where the best opportunities for AI to create high-quality fiction are in places where AI has inherent structural advantages. At this moment AI narrative fiction does not come anywhere near the magnetic, compelling quality of authentic, insightful, intelligent human authors. It remains to be seen whether that is even possible. But we have already established that AI does have an inherent advantage at creating serviceable prose fast, and entrepreneurial types have been quick to seize the opportunity by cranking out lots of slop.

To be sure, this is nothing new. Sturgeon's law: "90% of everything is crap." Paperback writers as good as Lawrence Block and Donald Westlake cut their teeth by writing a novel every ten days, and their first twenty or fifty weren't that great. But there isn't a market for that kind of purple paperback prose today; it's been supplanted by Kindle romances and (frankly) by first-person-shooter games. So algorithmic publishers must look elsewhere. My theory is that until the quality of model prose improves, the best opportunity for AI fiction is to take advantage of AI's unique proficiencies: breadth, depth, data manipulation. My working strategy is that every fiction imprint should grow into its own Marvel Cinematic Universe: thousands of characters, tens of thousands of stories, all interconnected and constantly reinvented for new readers. This is a "backlist" play of the kind that publishers are used to running.

"Most of the simplification of book design and format has been driven by cost"

It remains to be seen which, if any, of these innovations will stick. The point is that it's easy to do lots of them very fast. A publishing world that has been characterized by slow evolution of formats will suddenly be confronted by a Cambrian explosion of them. Frankly, I think this is a good thing. The history of parts of the book is told in an excellent book of the same name—Keith Houston's The Book: A Cover-to-Cover Exploration of the Most Powerful Object of Our Time (W. W. Norton, 2016)[^3]—and, nutshelling it ruthlessly, it is mostly a story of ornate diversity disappearing to be replaced by friction-reducing simplicity. Title pages used to have ten or twenty sub- and sur-titles, imprints, dedications, and fonts. Now they have one or two. Production-complex footnotes that put the reference on the same #%7!! page as the citation have been replaced by easily flowed and compartmentalized end notes. Instead of six or ten different indexes to a massive multivolume history, we're lucky to get one.

Just my opinion, and not necessarily a widespread one, but I think most of the simplification of book design and format has been driven by cost and is not really to the advantage of immersive readers who like to "sit with" a book. AI may help reverse this unfortunate trend.

How do you assess traditional publishers' approach to AI today?

The industry should and mostly does respect the constraints imposed by practical, structural, ethical, and legal relationships with its stakeholders, especially authors and readers. This results in most companies being "slow followers" at a pace adjusted to company and reader preferences. The problem is that slow following may not be a survival path during an exponential singularity. Anthropic CEO Dario Amodei likes to say that AGI—which he expects to arrive six to twelve months from now—will be like having a million geniuses in a data center. Actually, it's worse than that, because it won't stop at a million: why would it? Today's AI steered by clever individuals can already do a lot of things that human publishers can't. What is going to happen when there are thousands or tens of thousands or millions of creative people building their own Cinematic Universes? It's hard to see how the traditional publishing industry operates normally eighteen months from now. Fear is the appropriate response.

You have a massive volume of books in the pipeline. Can the market even cope with so many additional books? After all, the self-publishing market had already exploded by 2025.

Let's start by looking at the micro picture. Today Big Five Killer has about 700 books in its pipeline, split over 25 or so imprints. That's roughly 30 books a year for each imprint's audience to absorb. That's fairly typical. In one market that I know well, naval history, the market-leading US Naval Institute Press publishes about 80 titles a year; the more hobby-focused Seaforth publishes around 30; and the prodigiously prolific Osprey publishes 120 or more military and naval history titles a year. If you add university presses, major trades, and small proprietors, you probably get around 500 reasonably well-done works of naval history a year in the English-speaking world. My interpretation of those numbers is that there's room for someone like me who is focused on publishing high-quality works at my current scale. But there probably isn't room for a thousand new naval history books from me, and there isn't room for a thousand people who are trying to build Big Five Killers.

That's a good segue to the big picture, which is sobering: Bowker issued 4 million ISBNs in 2025, and that doesn't even include Kindle editions and other off-ISBN distribution such as Wattpad, Substack, and fanfic. Meanwhile, the prospects for the world of book-buyers are, well, not intoxicating. According to a December 2025 YouGov survey of more than 2,200 U.S. adults, 40 percent of Americans didn't read a single book in 2025.[^4] Another 27 percent read just one to four books for the whole year. Only 19 percent read ten or more books. The median American finished two books; the mean was eight—pulled upward by a small cohort of voracious readers who do the heavy lifting for the whole industry. Put bluntly: roughly 1 in 5 Americans accounts for more than 80 percent of all books read.[^4]

“As we enter the world of AI, everyone in the book publishing industry should be experimenting with new ways to reach young people”

What will happen as more and more books chase a static pool of readers? There are two basic paths publishers can choose: win on merit in an ever-more-crowded market, or make the pool of readers bigger. I've discussed above the reasons why I have confidence that algorithmic publishing will soon begin to "win" more and more categories. But if readers go the way of ballerinas and opera-goers[^7], that would be a hollow and likely unsustainable victory. The world I want is one where everyone who can benefit from the transformational experience of immersive reading does benefit.

That means helping grow the pool of readers. Recent discussion of the "Mississippi Miracle"[^8] has highlighted that success is still possible. Between 2013 and 2024, Mississippi climbed from 49th to 9th in the nation in fourth-grade reading by combining phonics-based instruction, mandatory third-grade retention for struggling readers, and literacy coaches placed in underperforming schools—all without significant increases in per-pupil spending.[^8] Children who fall behind early almost never catch up on their own: a struggling reader at the end of first grade has roughly a 90 percent chance of still struggling in fourth grade. The research on what works is clear—access to books, daily reading aloud in the home, and early phonics instruction are the most powerful variables—yet 61 percent of low-income families have no books at all in their homes[^6]. This is a solvable logistics and values problem: put books in kids' hands and encourage them until they succeed. But this problem is nested inside a bunch of other problems, and so are the solutions. Publishers must bring offerings to market that are capable of surviving an onslaught of competitive audiovisual media as well as social and economic stressors that increasingly crowd out the quiet places needed for longform reading.

It's for this reason that I am developing another new imprint called Not A Miracle Readers that will be aimed at readers aged 9 to 12. Navigating middle school is tough, and it is filled with exit paths that can drastically shrink young people's future options. So Not a Miracle will have a thematic emphasis on teaching young people how to preserve their optionality as they enter the teen years. My autoideation process liked this idea, and panelist ratings spiked further when I added the tweak of focusing on story lines and settings "ripped from the headlines," built on very current topics: ICE, the Iran war, the advent of AGI, the return to the Moon. So that's another big part of my answer to "can the publishing world cope?" As we enter the world of AI, everyone in the book publishing industry should be experimenting with new ways to reach young people.

The throughline connecting all of these initiatives—from autoideation to adversarial review to semantically-targeted large print offerings—is that the book, as an object and as a data structure, is becoming something more than it has ever been. For most of publishing history, discoverability meant a placement on a bookstore table or a review in a Sunday supplement. That world is not coming back. The new discoverability is computational: it is about whether a book's metadata, embeddings, and structured content are legible to the systems that increasingly mediate between knowledge and the people who need it. I have been investing heavily in both dimensions—building richer semantic representations of my catalog and ensuring that every book I publish is structured to be not just read but reasoned over.[^9] Frontier language models are rapidly becoming the most voracious and consequential "readers" in existence, and publishers who treat them as mere summarizers or competitors are missing the point entirely. The publishers who thrive will be the ones who understand that a book optimized for a curious, well-informed AI agent—one that can retrieve, synthesize, recommend, and extend—is also a better book for a curious, well-informed human reader. Computability and readability are converging, and that convergence is the most important frontier in publishing today.

[^1]: "Software Developers Used More Versatile AI Agent-Powered Tools to Write Code," The Batch, deeplearning.ai, December 28, 2025. https://www.deeplearning.ai/the-batch/software-developers-used-more-versatile-ai-agent-powered-tools-to-write-code/

[^2]: "Anthropic Launches New Push for Enterprise Agents with Plug-ins for Finance, Engineering, and Design," TechCrunch, February 24, 2026. https://techcrunch.com/2026/02/24/anthropic-launches-new-push-for-enterprise-agents-with-plugins-for-finance-engineering-and-design/

[^3]: Keith Houston, The Book: A Cover-to-Cover Exploration of the Most Powerful Object of Our Time (New York: W. W. Norton, 2016).

[^4]: "Most Americans Didn't Read Many Books in 2025," YouGov, December 31, 2025. https://yougov.com/en-us/articles/53804-most-americans-didnt-read-many-books-in-2025

[^5]: "Child Literacy Statistics United States 2026," Brighterly, 2026. https://brighterly.com/blog/literacy-statistics/

[^6]: Ferst Readers, "Top Literacy Statistics." https://ferstreaders.org/resources/top-literacy-statistics

[^7]: Timothée Chalamet, remarks at Variety/CNN town hall with Matthew McConaughey, University of Texas at Austin, February 2026, as reported in "Timothée Chalamet Dragged by Opera and Ballet Worlds After He Said 'No One Cares' About Them," NBC News, March 8, 2026. https://www.nbcnews.com/pop-culture/pop-culture-news/opera-ballet-worlds-clap-back-timothee-chalamet-said-no-one-cares-rcna262133

[^8]: "Mississippi Miracle," Wikipedia, last modified April 2026. https://en.wikipedia.org/wiki/Mississippi_Miracle. See also Elise Clair, "Mississippi's Literacy Miracle: How Holding Students Back Moved a Whole State Forward," The Daily Economy, February 10, 2026. https://thedailyeconomy.org/article/mississippis-literacy-miracle-how-holding-students-back-moved-a-whole-state-forward/

[^9]: Book Discoverability Service. https://bigfivekiller.online/book_discoverability

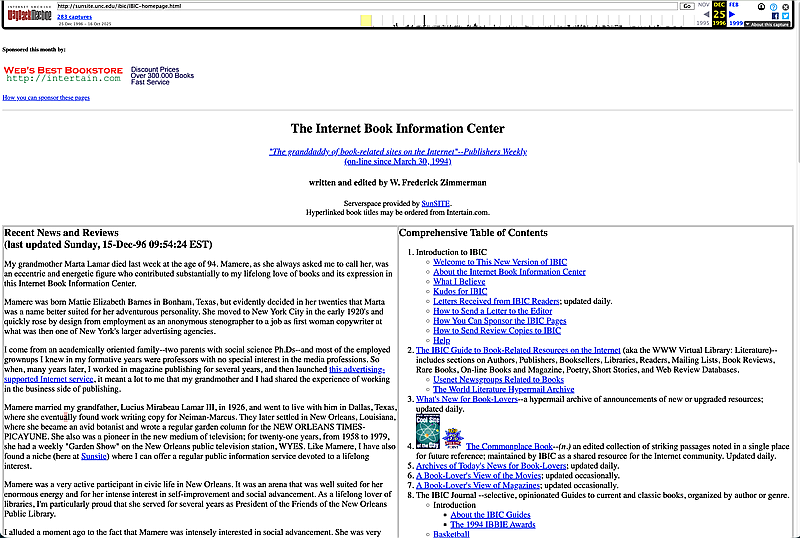

The Internet Book Information Center (1996)

About Fred Zimmerman

Fred Zimmerman is a veteran publishing innovator and technologist who has spent over three decades at the intersection of books and digital evolution. Based in Ann Arbor, Michigan, Fred is recognized as a true internet pioneer, having launched the Internet Book Information Center in March 1994—the first website specifically dedicated to books.

In 2006, he founded Nimble Books, where he experimented with agile publishing formats and "title-surfing" meta-books. After publishing hundreds of titles across diverse genres, Fred pivoted in 2011 toward a radical vision: recreating the entire traditional publishing pipeline in silico.